After close to 6 years with SGH Capital, I’m pleased to announce I’ve joined OSS Ventures to scale up their VC arm and operations. I’ll be forever grateful for my time at SGH: from Entrepreneur in Residence to Partner, the learning curve has been incredible, and it now felt like the right time to hone in on what I like and understand best – B2B SaaS.

Founded in 2018, OSS is a hyper-focused venture builder and investor tackling the future of operations and manufacturing. Even though the industrial sector (including construction) represents ~27% of the world’s GDP1, it only attracts ~3% of VC funding2!

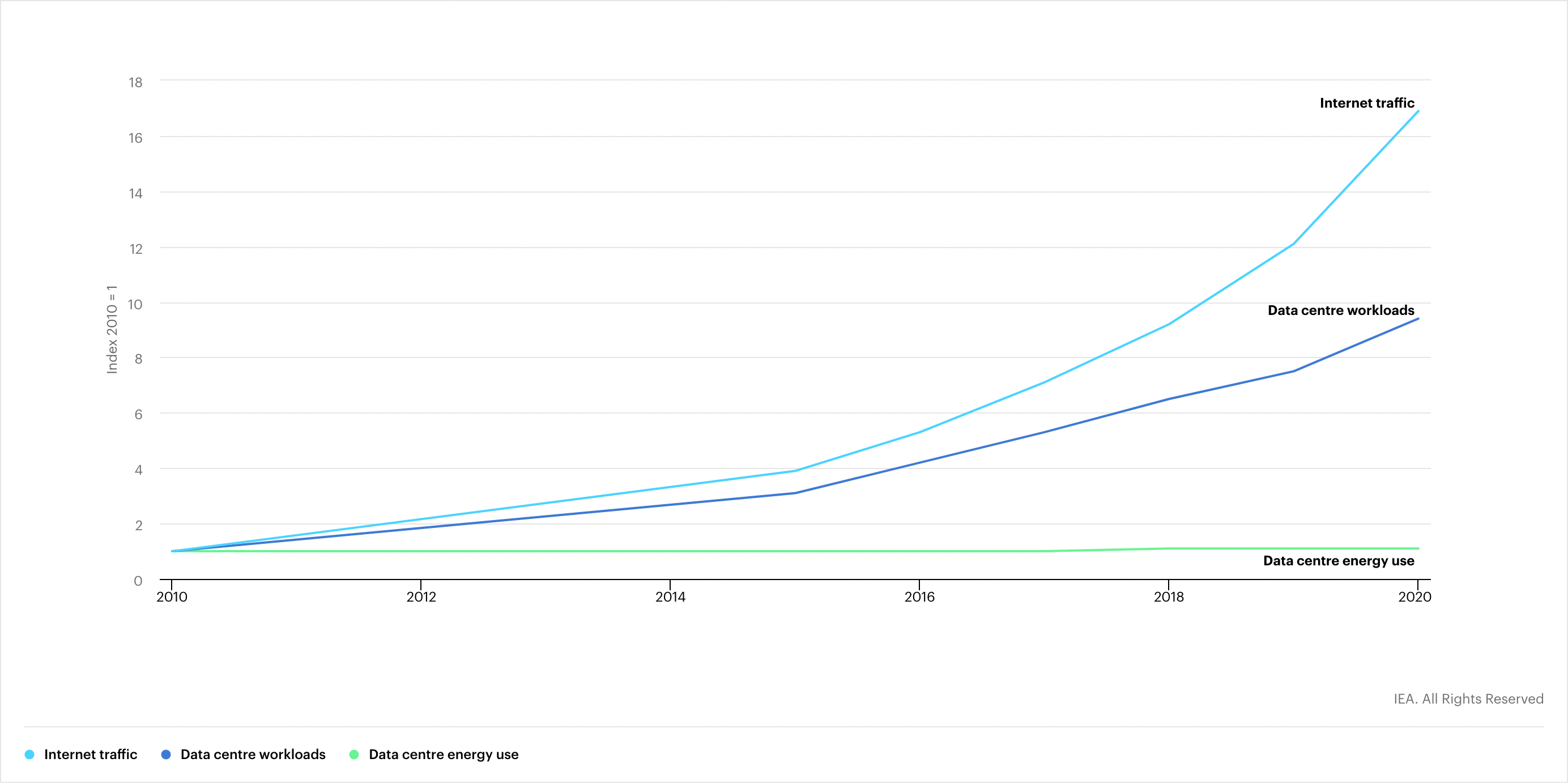

Climate change, geopolitical tensions, the energy crisis, inflation and supply-chain woes have magnified the weaknesses of our production and consumption models. To turn the tide, world leaders are undertaking massive investments, such as the Inflation Reduction Act, the CHIPS Act, or the Critical Raw Materials Act. Meanwhile, swaths of VCs are fighting for the hottest artificial intelligence deals but our factories and critical infrastructure rely on equipment that won’t be upgraded for another decade or two.

OSS leverages a wide network of industrial partners to build and invest in the modern factory stack. In the last years, we have incubated and funded a dozen startups, whose solutions are used in over 1.000 factories. Our companies help manufacturers unlock tangible operational efficiencies and compound the know-how of their employees. These solutions fill information gaps, replace paper forms, disjointed spreadsheets and antiquated software so that not just white-collar but also blue-collar and deskless workers can work smarter instead of harder.

OSS also invests ahead of or alongside top-tier VCs in extraordinary founders who want to build enduring businesses in that field in the US and Europe. We partner from pre-seed through Series B, and our hands-on operating partners help entrepreneurs scale their teams and revenues and set them up for success.

We are absolutely convinced that the next crop of billion-dollar companies will include several industrial SaaS platforms because that’s what our portfolio growth and numbers hint at.

If you are working on something in that space, please reach out!

]]>